Noisy Occupancy Map with Zed Camera

Hi, I am trying to create an occupancy grid using a ZED stereo camera along with rtabmap_ros. My results are very noisy and I am wondering if the problem is with the ZED camera, or with my rtabmap_ros config, or both. Can anyone verify whether or not rtabmap can deal with this level of sensor noise.

Details:

- ZED camera calibrated using the calibration tool found in /usr/local/zed

- Running on NVIDIA TX1, Ubuntu 14.04, ROS Indigo

- Indoor well light room, ZED mounted on mobile robot being joysticked

Pictures:

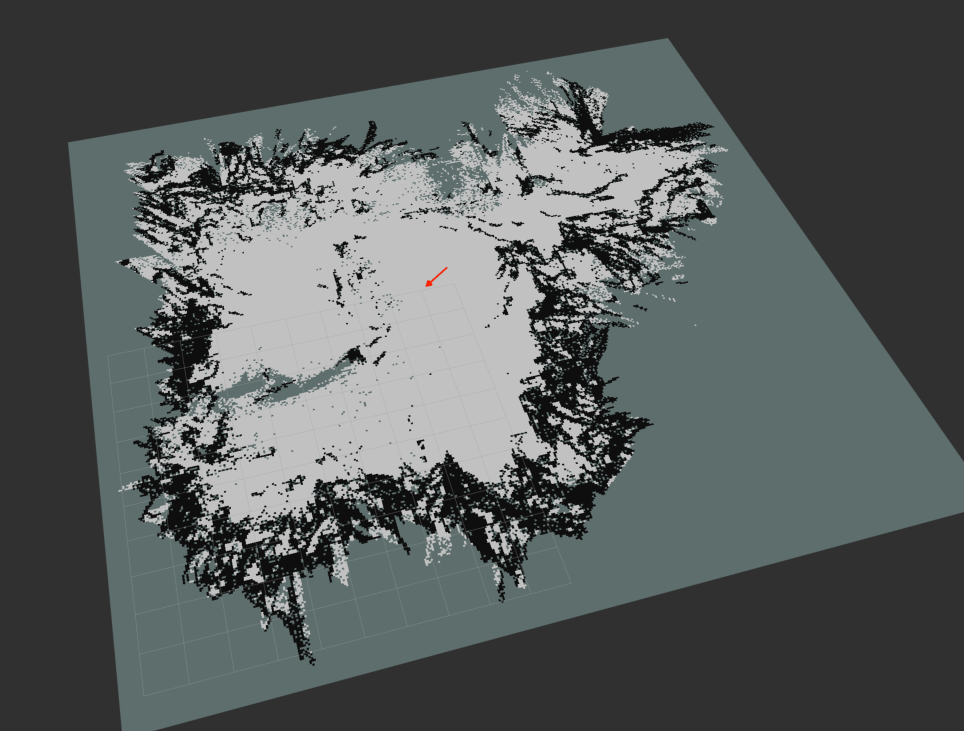

Here is a sample of a map I have generated

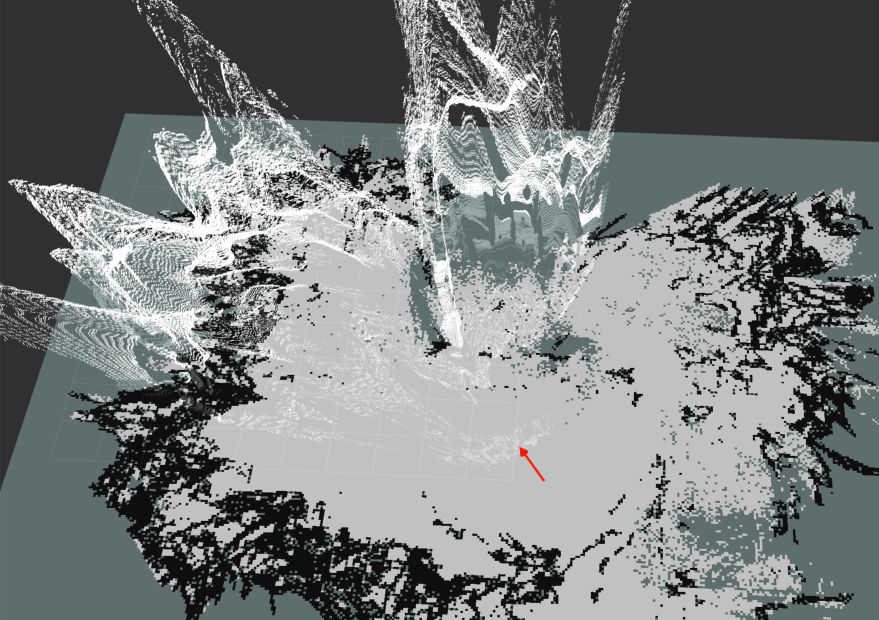

And here is an example of the depth cloud at one instant

As you can see the depth cloud is fairly noisy, but what sensor data isn't.

Launch File:

<launch>

<include file="$(find zed_wrapper)/launch/zed_tf.launch" />

<arg name="svo_file" default=""/>

<group ns="camera">

<node name="zed_wrapper_node" pkg="zed_wrapper" type="zed_wrapper_node" args="$(arg svo_file)" >

<param name="resolution" value="2" />

<param name="quality" value="1" />

<param name="sensing_mode" value="1" />

<param name="frame_rate" value="30" />

<param name="odometry_DB" value="" />

<param name="openni_depth_mode" value="0" />

<param name="rgb_topic" value="rgb/image_rect_color" />

<param name="rgb_cam_info_topic" value="rgb/camera_info" />

<param name="rgb_frame_id" value="/zed_link" />

<param name="left_topic" value="left/image_rect_color" />

<param name="left_cam_info_topic" value="left/camera_info" />

<param name="left_frame_id" value="/zed_link" />

<param name="right_topic" value="right/image_rect_color" />

<param name="right_cam_info_topic" value="right/camera_info" />

<param name="right_frame_id" value="/zed_link" />

<param name="depth_topic" value="depth/image_rect_color" />

<param name="depth_cam_info_topic" value="depth/camera_info" />

<param name="depth_frame_id" value="/zed_link" />

<param name="point_cloud_topic" value="point_cloud/cloud" />

<param name="cloud_frame_id" value="/zed_link" />

<param name="odometry_topic" value="odom" />

<param name="odometry_frame_id" value="/zed_link" />

<param name="odometry_transform_frame_id" value="/zed_tracked_frame" />

<param name="visual_odometry" value="false" />

<param name="odometry_topic" value="/odometry/filtered_map" />

</node>

</group>

<!--Visual SLAM: args: "delete_db_on_start" and "udebug" -->

<node name="rtabmap" pkg="rtabmap_ros" type="rtabmap" output="screen" args="--delete_db_on_start" >

<param name="frame_id" type="string" value="base_link"/>

<param name="subscribe_stereo" type="bool" value="false"/>

<param name="subscribe_depth" type="bool" value="true"/>

<remap from="rgb/image" to="/camera/rgb/image_rect_color"/>

<remap from="rgb/camera_info" to="/camera/rgb/camera_info"/>

<remap from="/depth/image" to="/camera/depth/image_rect_color"/>

<remap from="odom" to="/odometry/filtered_map"/>

<param name="queue_size" type="int" value="30"/>

<!-- RTAB-Map's parameters -->

<param name="Rtabmap/TimeThr" type="string" value="700"/>

<param name="Grid/DepthDecimation" type="string" value="4"/>

<param name="Grid/FlatObstacleDetected" type="string" value="true"/>

<param name="Kp/MaxFeatures" type="string" value="200"/>

<param name="Kp/MaxDepth" type="string" value="10"/>

<param name="Kp/DetectorStrategy" type="string" value="0"/> <!-- use SURF -->

<param name="SURF/HessianThreshold" type="string" value="1000"/>

<param name="Vis/EstimationType" type="string" value="0"/> <!-- 0=3D->3D, 1=3D->2D (PnP) -->

<param name="RGBD/LoopClosureReextractFeatures" type="string" value="true"/>

<param name="Vis/MaxDepth" type="string" value="10"/>

</node>

</launch>

Can you please tell in detailed steps me how I can run your launch file..since I am a novice thanks in advance

I got this launch file from here ( https://www.stereolabs.com/documentat... ). Follow the steps on that page to run the launch file. Let me know if you have any questions.

ZED (Init) >> WARNING: FPS is too low to enable positional tracking and spatial mapping. Consider using PERFORMANCE parameters found this error,fps-30,but usb is 2.0

In zed_camera.launch change the quality value to 1 if it isn't already. What computer are you running the ZED camera on? It needs a decent GPU to run.

That's Xeon 8gb, with nvidia gtx580 with gpu memory of 1.5gb memory

hi im also a colleague of ankursingh0820 i am running it on a jetson tx1.I still get the warning FPS is too low.But there is no output generated