Why is the map so bad when using a depth camera?

I recently bought a D435 (Intel RealSense) and used the rtabmap package to perform SLAM.

I simply used this roslaunch:

http://wiki.ros.org/rtabmap_ros/Tutor...

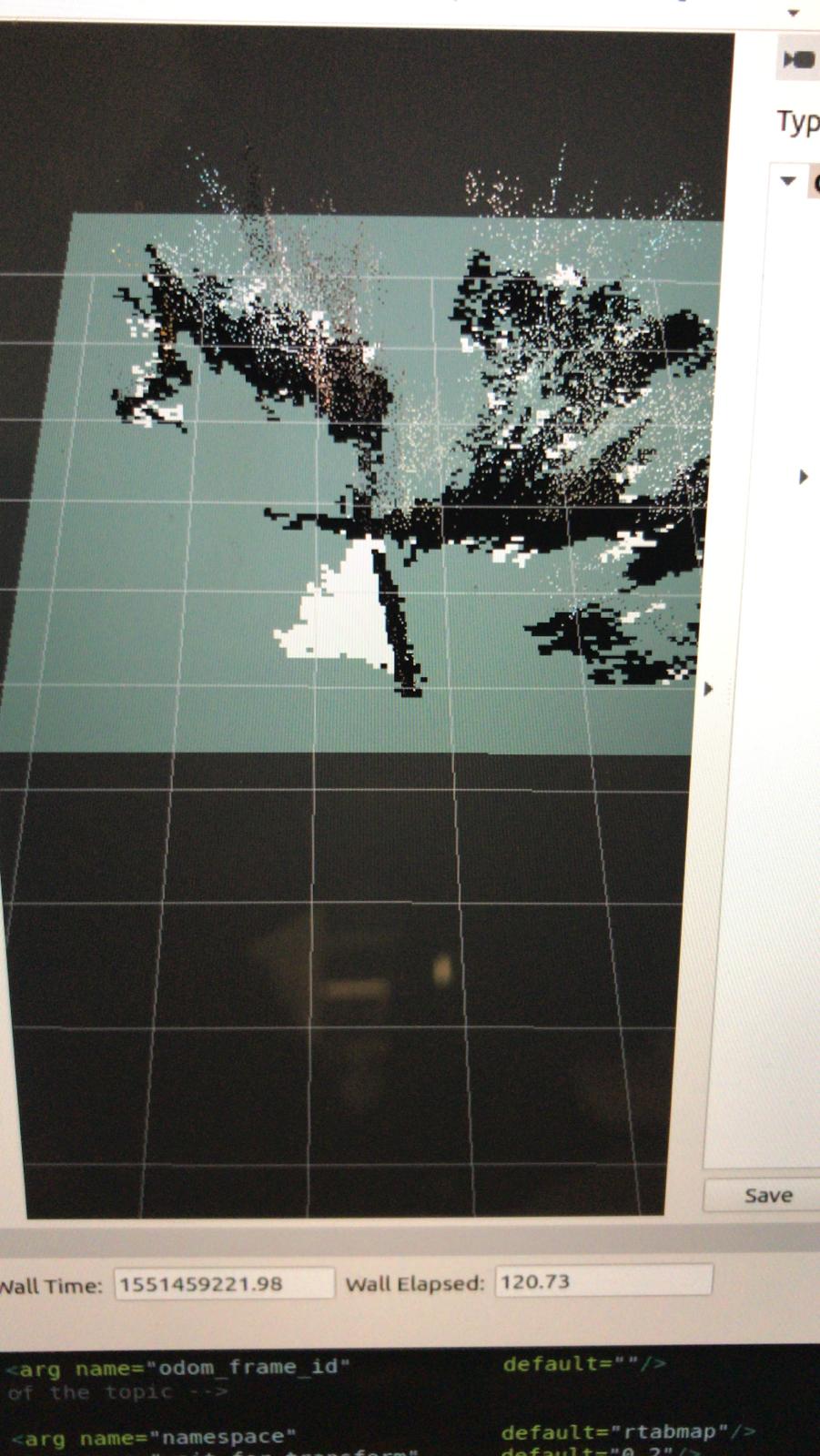

And the result is this:

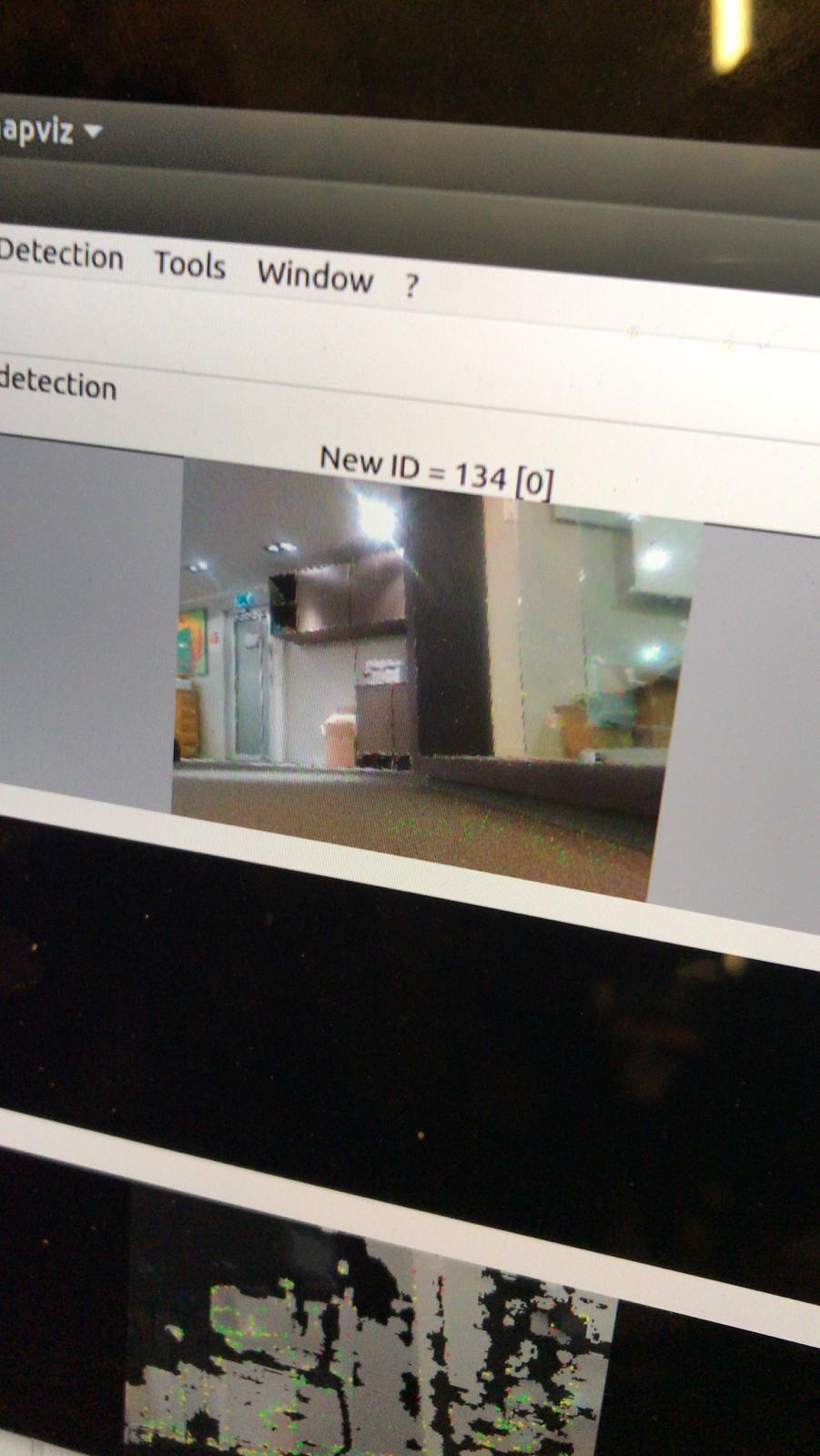

The environment is this:

Any ideas?

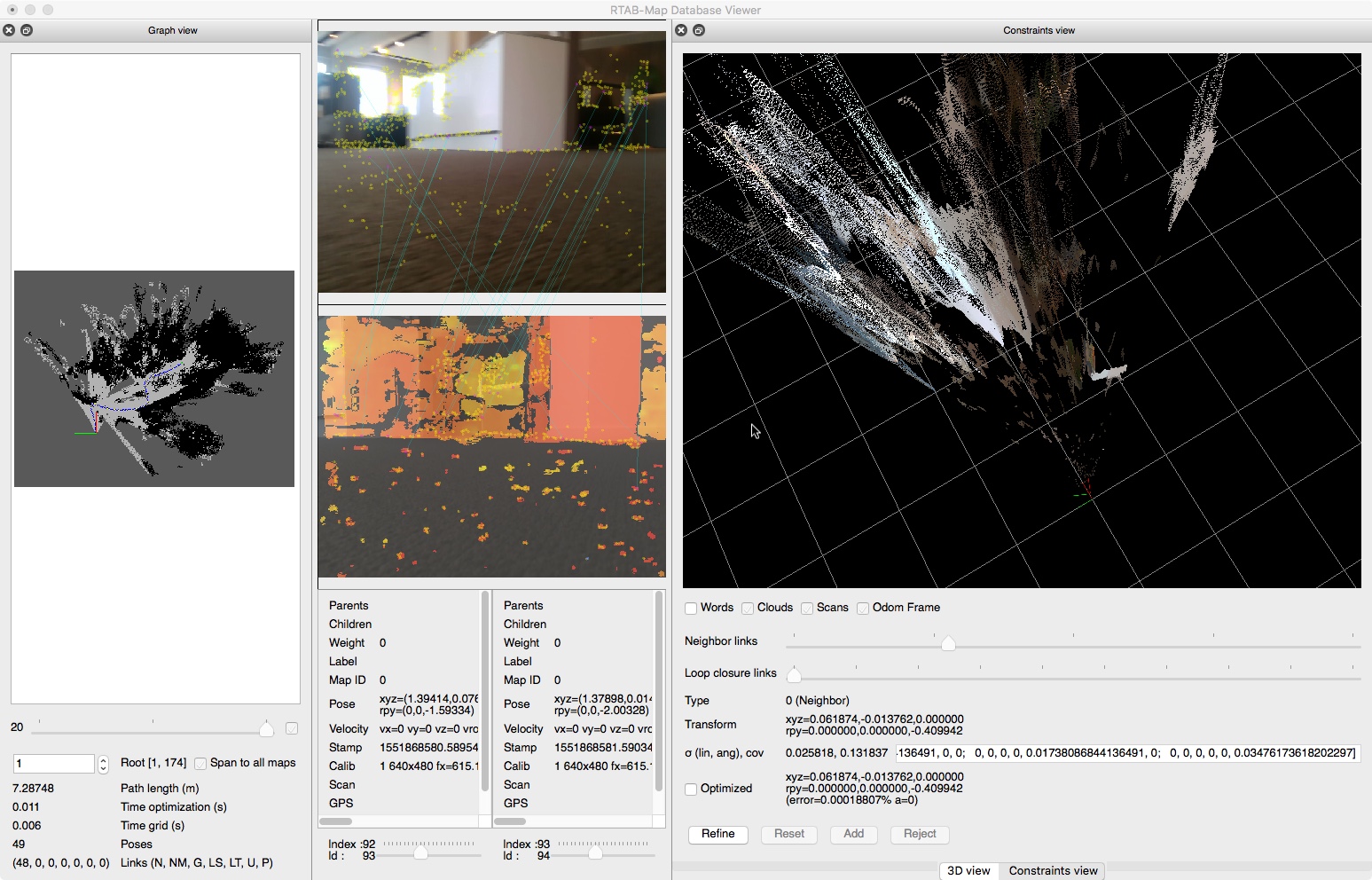

DB:

Was this running on a real robot, not in Gazebo?

@kitkatme Yes, but it is worth noting that I used only the camera, without any external odometry.

Was the camera stationary, or did you move it? If you moved it, what kind of trajectory did it follow? If the camera is not moving, the noise in the cloud is coming from the sensor.
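To check the stationary case, here is a rough sketch that measures how much each depth pixel jitters over time when the camera is held still. It is not part of rtabmap. The function name `temporal_depth_noise`, the frame layout (2D lists of depth values in millimetres), and treating `0` as an invalid reading are all assumptions for illustration.

```python
from statistics import pstdev


def temporal_depth_noise(frames, invalid=0):
    """Per-pixel standard deviation of depth over time (hypothetical helper).

    frames: list of equally sized 2D lists of depth values, captured
            while the camera is stationary (assumed to be in millimetres).
    invalid: value that marks a missing depth reading (assumed to be 0).
    Returns a 2D list; a pixel that is invalid in any frame yields None.
    """
    if not frames:
        raise ValueError("need at least one frame")
    rows, cols = len(frames[0]), len(frames[0][0])
    noise = []
    for r in range(rows):
        row = []
        for c in range(cols):
            # Collect the same pixel across all frames.
            samples = [frame[r][c] for frame in frames]
            if any(s == invalid for s in samples):
                row.append(None)
            else:
                row.append(pstdev(samples))
        noise.append(row)
    return noise


# Made-up 1x3 frames: a near pixel, a far pixel, and one with a dropout.
frames = [
    [[1000, 2000, 0]],
    [[1002, 2010, 1500]],
    [[998, 1990, 1500]],
]
noise = temporal_depth_noise(frames)
```

If the values in `noise` are already large with the camera held still, the scatter in the cloud comes from the sensor rather than from the mapping.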

@matlabbe I did move the camera forward.

Can you share the resulting database? (default ~/.ros/rtabmap.db)

@matlabbe I have added the DB.