cannot transform laser using laser_assembler

I've successfully used laser_assembler to convert laser scans to pointclouds. However, when viewing the concatenated clouds in rviz they are not de-rotated (projected) accordingly with the fixed frame I've chosen. Pictures:

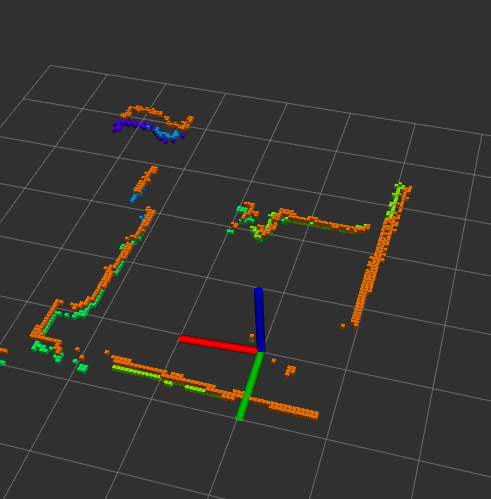

initially (laser is started):

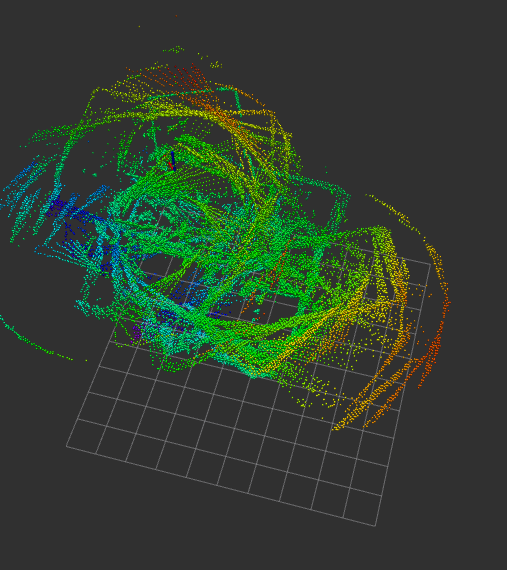

After moving the LiDAR around:

Even though octomap progresses correctly in terms of absolute position (x,y,z), it strikes out when it comes to the angles.

Launch file:

<launch>

<node pkg="laser_assembler" type="laser_scan_assembler" output="screen" name="laser_scan_assembler">

<param name="tf_cache_time_secs" type="double" value="10.0" />

<param name="max_scans" type="int" value="400" />

<param name="ignore_laser_skew" type="bool" value="true" />

<param name="fixed_frame" type="string" value="base_link" />

</node>

<node pkg="laser_assembler" type="periodic_snapshotter" output="screen" name="periodic_snapshotter"/>

<node pkg="IMUEstimate" type="IMUEstimate" name="IMUEstimate" />

<node pkg="octomap_server" type="octomap_server_node" name="octomap_server">

<param name="resolution" value="0.05" />

<param name="latch" value="false" />

<!--param name="base_frame_id" value="base_link" /-->

<!-- fixed map frame (set to 'map' if SLAM or localization running!) -->

<param name="frame_id" type="string" value="map" />

<!-- maximum range to integrate (speedup!) -->

<param name="sensor_model/max_range" value="10.0" />

<!-- data source to integrate (PointCloud2) -->

<remap from="cloud_in" to="/assembled_cloud2_out" />

</node>

</launch>

I've tried every possible combination of fixed_frame and frame_id for laser_assembler and octomap respectively, but the result is always the same, either I get a 2D or a 3D octomap that gets rotated by the laser's unprojected frame. I've even pulled in pcl_ros and modified the periodic_snapshotter to transform the resulting pointcloud but no cigar. Here is the code for your review. I tried all combinations possible for the transforms so it's nothing about that (the rest of periodict_snapshotter.cpp is the same):

// Make the service call

if (client_.call(srv)) {

tf::StampedTransform localTransform;

try {

listener_.lookupTransform("scanmatcher_frame", "map",

ros::Time(0), localTransform);

}

catch (tf::TransformException &ex)

// If the tf listener cannot find the transform, print an error and continue

{

ROS_ERROR("Error: %s", ex.what());

}

// Transform the point cloud from the map_world frame into the base_footprint frame

sensor_msgs::convertPointCloudToPointCloud2(srv.response.cloud, initialCloud2);

pcl_ros::transformPointCloud("map", localTransform, initialCloud2,

transformedCloud2);

ROS_INFO("Published Cloud with %u points",

(uint32_t)(srv.response.cloud.points.size()));

transformedCloud2.header.frame_id = "map";

pub_.publish(transformedCloud2);

}

Any ideas? I feel like I'm super closed but I just don't know what's going on.

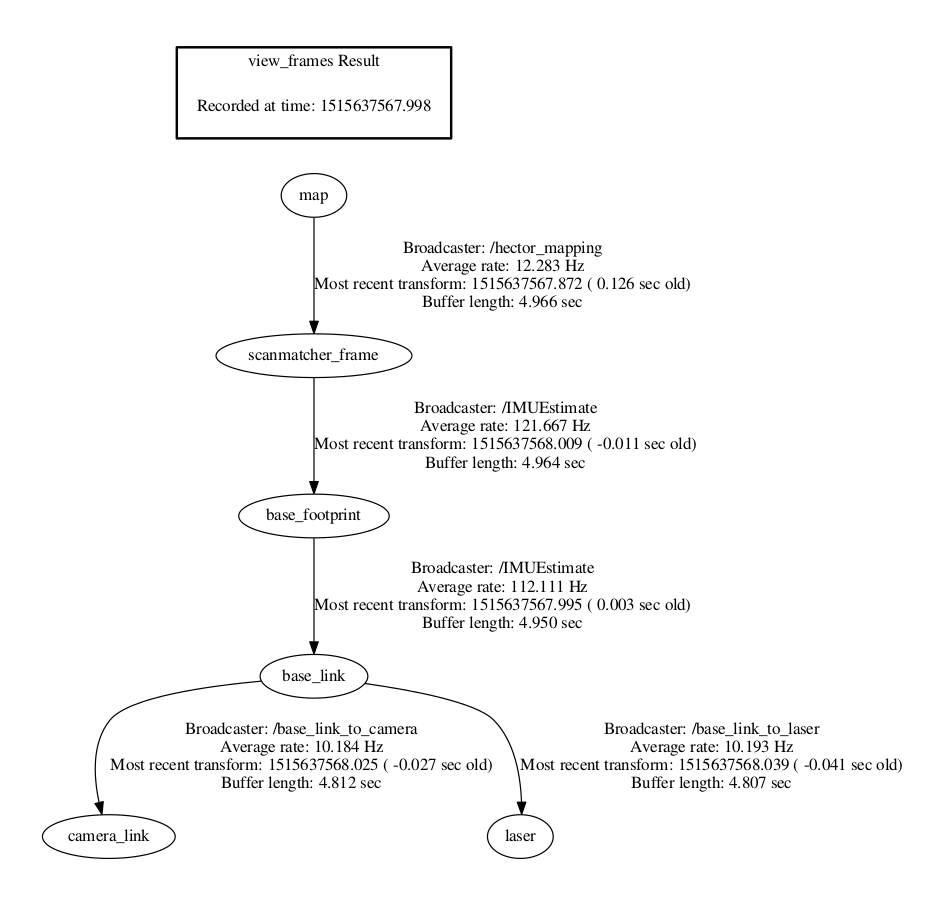

UPDATE, here is my tf tree:

Do you have

tfset up correctly? I.e. is the laser moved with respect to yourfixed_frame(which isbase_link) and you know and publish viatfhow this is moved. Otherwise, thelaser_assemblerdoes not know how to put the single readings together...Yes, I have a static tf publisher from base link to laser. I’ll post the tf tree in a bit so you could take a look for yourself. I would assume this is correct, right?

just to clarify some more questions:

Yup, the laser is not moving w.r.t the base_link, and I am moving the robot around. Base_footprint is fixed w.r.t to base_link, but it does not have any orientation transforms applied to it. The scanmatcher_frame w.r.t base_footprint moves correctly.

I feel like the community could have a use for this , but if you don't know it's ok, I'll probably just try something else.