Robot coordinates in map

Hi,

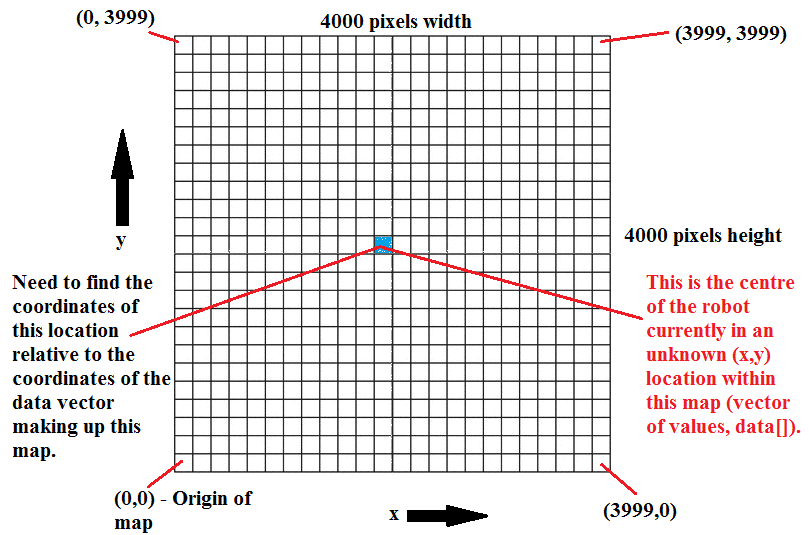

I am developing an exploration algorithm based on frontier exploration. In my code, I subscribe to the /map topic and process the data[] vector from its messages. The map I get from /map is 4000 x 4000 pixels (i.e. height = width = 4000). Each pixel has its own x and y coordinate, and I use the pixel values (0, +100 or -1) to extract the (x, y) coordinates of the free cells (no obstacles) that are adjacent to unknown cells (cells not yet explored).
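The frontier test described above (a free cell with at least one unknown neighbour) can be sketched in Python as follows. This is a minimal illustration, not the poster's code. It assumes `data` is the flat, row-major list from nav_msgs/OccupancyGrid (index = x + y * width, with y = 0 at the map origin), treats only the value 0 as free, and checks the four edge-adjacent neighbours. 8-connectivity is an equally common choice. The function name `find_frontier_cells` is hypothetical.

```python
from typing import List, Sequence, Tuple

FREE = 0       # value used for free cells in this thread
UNKNOWN = -1   # value used for unexplored cells


def find_frontier_cells(data: Sequence[int], width: int,
                        height: int) -> List[Tuple[int, int]]:
    """Return (x, y) cells that are free and touch at least one unknown cell.

    Assumes row-major storage as in nav_msgs/OccupancyGrid:
    index = x + y * width.
    """
    frontier = []
    for y in range(height):
        for x in range(width):
            if data[x + y * width] != FREE:
                continue
            # Check the 4-connected neighbours, staying inside the grid.
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nx, ny = x + dx, y + dy
                if 0 <= nx < width and 0 <= ny < height \
                        and data[nx + ny * width] == UNKNOWN:
                    frontier.append((x, y))
                    break  # one unknown neighbour is enough
    return frontier


if __name__ == "__main__":
    # Toy 4 x 3 map, row y = 0 first: -1 unknown, 0 free, 100 occupied.
    grid = [
        0,   0, -1, -1,
        0, 100, -1, -1,
        0,   0,  0, -1,
    ]
    print(find_frontier_cells(grid, width=4, height=3))
```

On a 4000 x 4000 grid this visits 16 million cells per call, so restricting the search to the region that changed (or vectorising with NumPy) may be worth considering.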

Now I need to get the robot's location in (x, y) coordinates within that map, i.e. a cell within the 4000 x 4000 grid stored in the data[] vector returned by /map. I have tried listening to the transform between /map and /odom, but the robot pose I get with respect to the map (i.e. according to the transform) still doesn't make much sense. I am following this code, and my robot pose output looks like this:

position: x: -9.87982119798, y: 16.8167273361, z: 0.0

orientation: x: 0.0, y: 0.0, z: 0.627633768897, w: 0.778508736072

This doesn't make much sense to me, because I need an (x, y) value that corresponds to an (x, y) coordinate in the map. This image might help you understand what I mean and the kind of result I'm after.
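A pose like the one above is expressed in metres in the map frame, not in cells. nav_msgs/OccupancyGrid carries what is needed to convert it: `map.info.origin` (the world pose of cell (0, 0)) and `map.info.resolution` (metres per cell). The sketch below assumes the map origin has no rotation. The helper names are hypothetical, and the origin (-100, -100) and resolution 0.05 m used in the example are placeholder values (a 200 m x 200 m area at 0.05 m per cell would give a 4000 x 4000 grid). Read the real values from `map.info`. The orientation is a quaternion, so for a planar robot the heading is recovered as a yaw angle.

```python
import math
from typing import Optional, Tuple


def world_to_map(wx: float, wy: float, origin_x: float, origin_y: float,
                 resolution: float, width: int,
                 height: int) -> Optional[Tuple[int, int]]:
    """Convert a map-frame position (metres) to a grid cell (mx, my).

    Assumes the map origin has zero rotation. Returns None if the point
    falls outside the grid.
    """
    mx = int(math.floor((wx - origin_x) / resolution))
    my = int(math.floor((wy - origin_y) / resolution))
    if 0 <= mx < width and 0 <= my < height:
        return mx, my
    return None


def yaw_from_quaternion(x: float, y: float, z: float, w: float) -> float:
    """Heading (rotation about z) in radians from a unit quaternion."""
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


if __name__ == "__main__":
    # Placeholder map parameters; take the real ones from map.info.
    origin_x, origin_y, resolution = -100.0, -100.0, 0.05
    width = height = 4000

    cell = world_to_map(-9.87982119798, 16.8167273361,
                        origin_x, origin_y, resolution, width, height)
    print("cell:", cell)
    if cell is not None:
        mx, my = cell
        print("index into data[]:", mx + my * width)  # row-major index
    print("yaw (rad):", yaw_from_quaternion(0.0, 0.0,
                                            0.627633768897, 0.778508736072))
```

The resulting (mx, my) follows the OccupancyGrid convention, so its flat index is mx + my * width. If data[] is instead walked the way map_saver does, with y counted from the top row, the row becomes height - 1 - my.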

Thank you in advance.

Hi sobot. What I did was iterate through every value in the data[] vector, using the same index as the map_saver code to recover the (x, y) coordinates. If the value in data[] is 0, the cell is free; if it is 100, it is occupied; otherwise it is unknown. The key is to have two nested loops, one for y and one for x.

For the record, the outer loop goes through the y coordinates and the inner loop through the x coordinates. Then, to index data[], use `i = x + (map.info.height - y - 1) * map.info.width`.
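The nested-loop scheme described in these two replies can be sketched in Python like this. It is an illustration rather than the poster's snippet. `classify_cells` is a hypothetical name, and the sketch uses the exact-value rule from the reply (0 free, 100 occupied, anything else unknown); newer map_saver versions compare against configurable thresholds instead. Because of the `(height - y - 1)` term, y = 0 here is the top row of the saved image, i.e. the last row of the OccupancyGrid.

```python
from typing import Dict, List, Sequence, Tuple


def classify_cells(data: Sequence[int], width: int,
                   height: int) -> Dict[str, List[Tuple[int, int]]]:
    """Split cells into free / occupied / unknown using map_saver-style (x, y).

    y = 0 is the top row of the saved image, so the flat index is
    i = x + (height - y - 1) * width.
    """
    cells: Dict[str, List[Tuple[int, int]]] = {
        "free": [], "occupied": [], "unknown": []}
    for y in range(height):        # outer loop: y (image rows)
        for x in range(width):     # inner loop: x (columns)
            i = x + (height - y - 1) * width
            value = data[i]
            if value == 0:
                cells["free"].append((x, y))
            elif value == 100:
                cells["occupied"].append((x, y))
            else:
                cells["unknown"].append((x, y))
    return cells


if __name__ == "__main__":
    # 2 x 2 toy grid in OccupancyGrid order (bottom row first).
    print(classify_cells([0, 100, -1, 0], width=2, height=2))
```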

Thanks so much for your reply. I got the idea behind it, but since I'm quite new to programming it's a bit complicated for me to write. Do you have the code snippet uploaded somewhere so I can take a look at it and adapt it to my application? PS: I have code for Yamauchi frontier exploration if you still need it.

Here's a snippet of my work. Sorry, I couldn't share all of it, but it's still a work in progress. Can you share the code you have for Yamauchi frontier exploration, please? I would like to have a look. Thanks!

Here's the Planner function of the Yamauchi-based frontier project that I have: Planner. Hope that answers your question. I'll take a look at your code snippet; thanks for that, mate.

So you are incorporating the path planning function and the frontier exploration algorithm all in one?

Finding the target and planning both happen in one node. You may be interested in another part of the code too: here is the link to the target finding, which happens in a mapCallback function. I got these snippets to help with my navigation, though.

I was going to use the move_base package for navigation. How does that sound?