I'll add this as an answer now:

The problem you have is (probably) that you visualize everything in rviz with respect to the base_link, i.e. the robot. This is why it looks like only the wheels are spinning in rviz. Thus, you have to set the fixed_frame to something that is fixed in the world.

You could use the odometry frame (usually odom) for getting better behaviour. Therein, the motion of the robot is accumulated as seen from internal sensors (wheel encoders, IMU, ...). However, those sensors are not accurate over time, so you'll receive a drift there as well.

Best way would be have a localization running and use the global frame as reference (usually map). You can use e.g. amcl for this, but you require a map. You could also write (or use, if one already exists) a node that directly takes the real world pose from gazebo and publishes the respective transfrom (map -> odom). There is the fake_localization package, that might be suitable for your usecase.

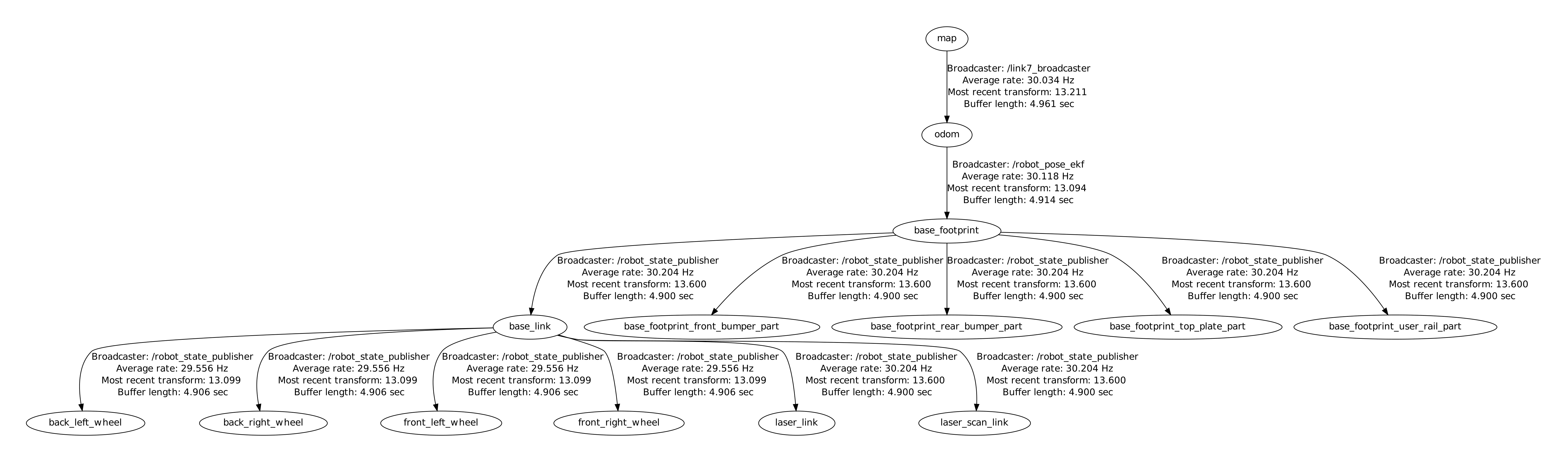

If you want to get information about your current transform (tf) tree, you can use rosrun tf view_frames which produces a PDF with the respective information. Or use rosrun rqt_tf_tree rqt_tf_tree which provides the same output but more dynamically and not in a PDF.

Do you have, in

rvizset thefixed_frametomap? If it isbase_link, you'll see everything relative to the robot, thus the wheels spinning, but the robot not "moving". You obviously need to have some kind of localization for this...Hi @mig so currently my fixed_frame is set to base_link but do you mean I should change it to map and also I need localization or I only need to change the fixed_frame to map ?