Integrate free space into octomap

Hello,

I am looking into how octomap integrates information from PointCloud2 into the Octree structure of octomap.

Specifically for free space coming from a LIDAR. Let's take the velodyne_pointcloud sensor simulator for example. Is there a way to take into account the laser beams that reported no obstacles as free space?

I give a more general explanation below. But after reading the code for the octomap_server, at OctomapServer.cpp does the last else of the insertScan method insert free space of rays that make it all the way through here? Am I correct in my interpretation that laser beams that have not reached any obstacle are marked as NaN?

I understand that for each point in the point cloud the space between the origin and the point is marked as free.

Is there a way to mark as free space where there is no obstacle to generate the point in the point cloud?

As there are few obstacles in the world I am using, the part of the world completely free stays as unexplored.

It's easier to see graphically.

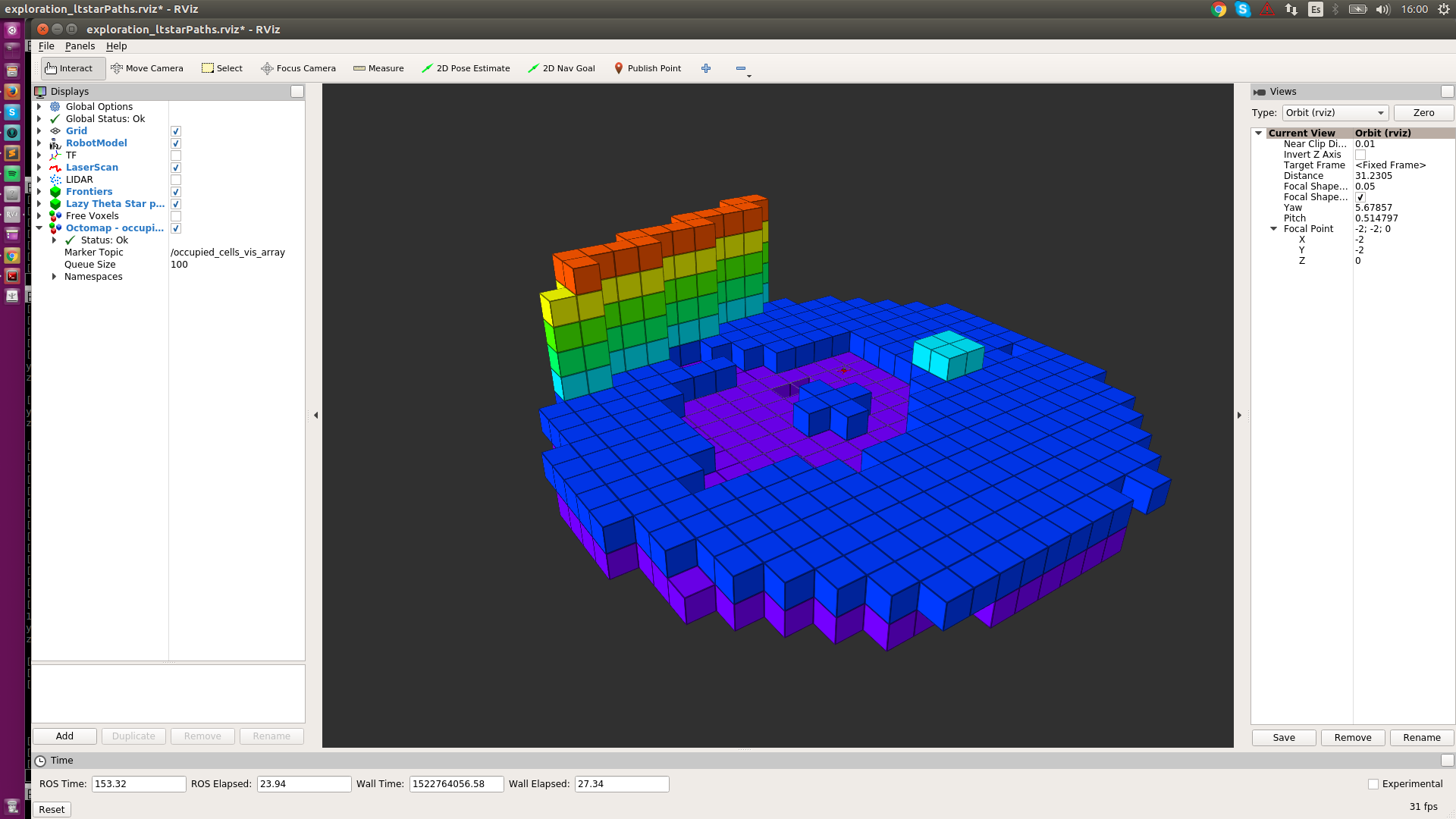

Here is the occupied space with the ground, a wall, and a minor obstacle:

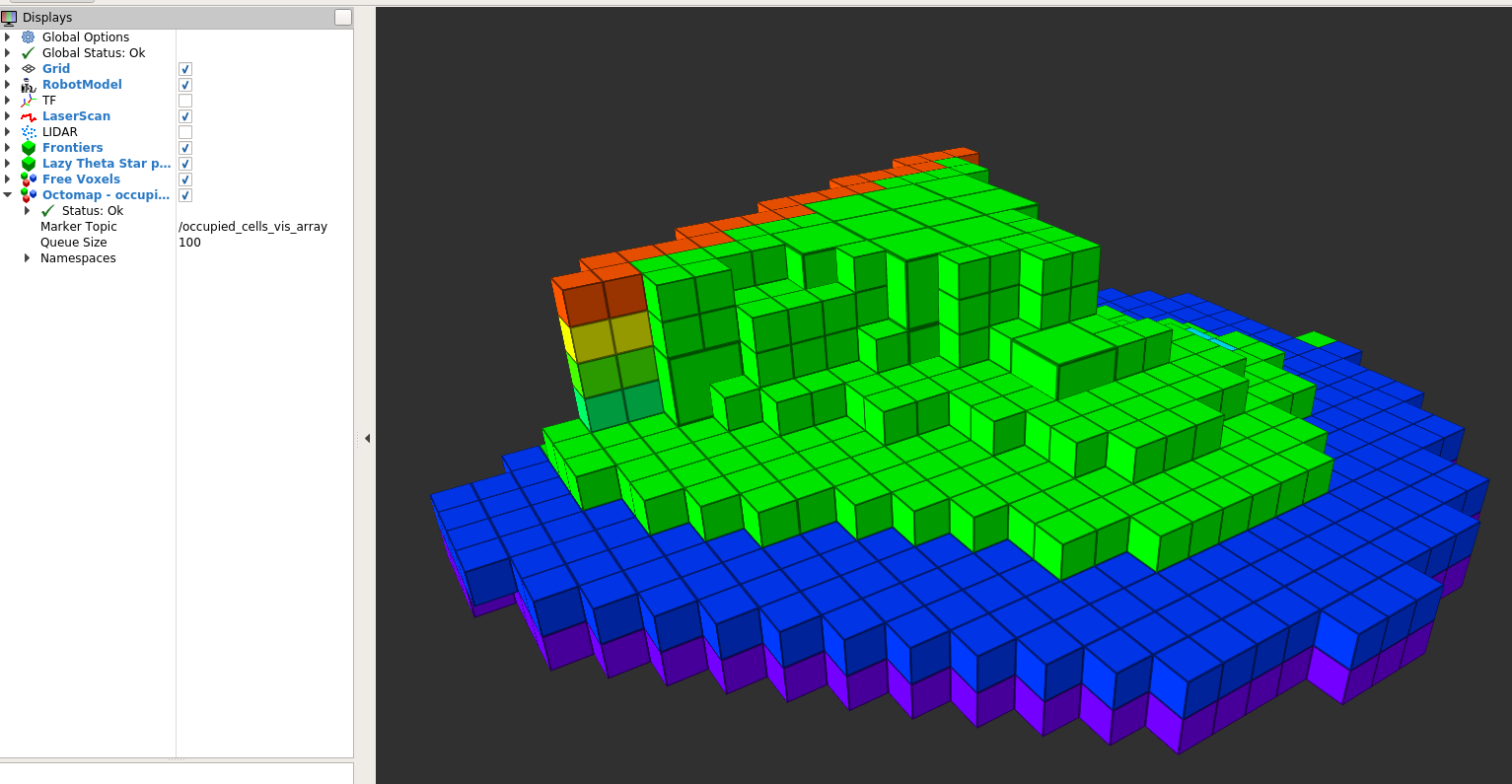

The robot is at the center of the purple "circle". Try to memorize the position relative to the wall - is several maximum resolution voxels away. The corresponding free space (from the same perspective) is here in green:

After paying a bit of attention, the image shows that there is much more explored, free space between the UAV and the wall than in any other direction.

This could just be because of the sensor capabilities/position. However, the sensor is a velodyne (360º sensing). Plus the robot had just spin on himself (yaw spin) to overcome any sensor issue.

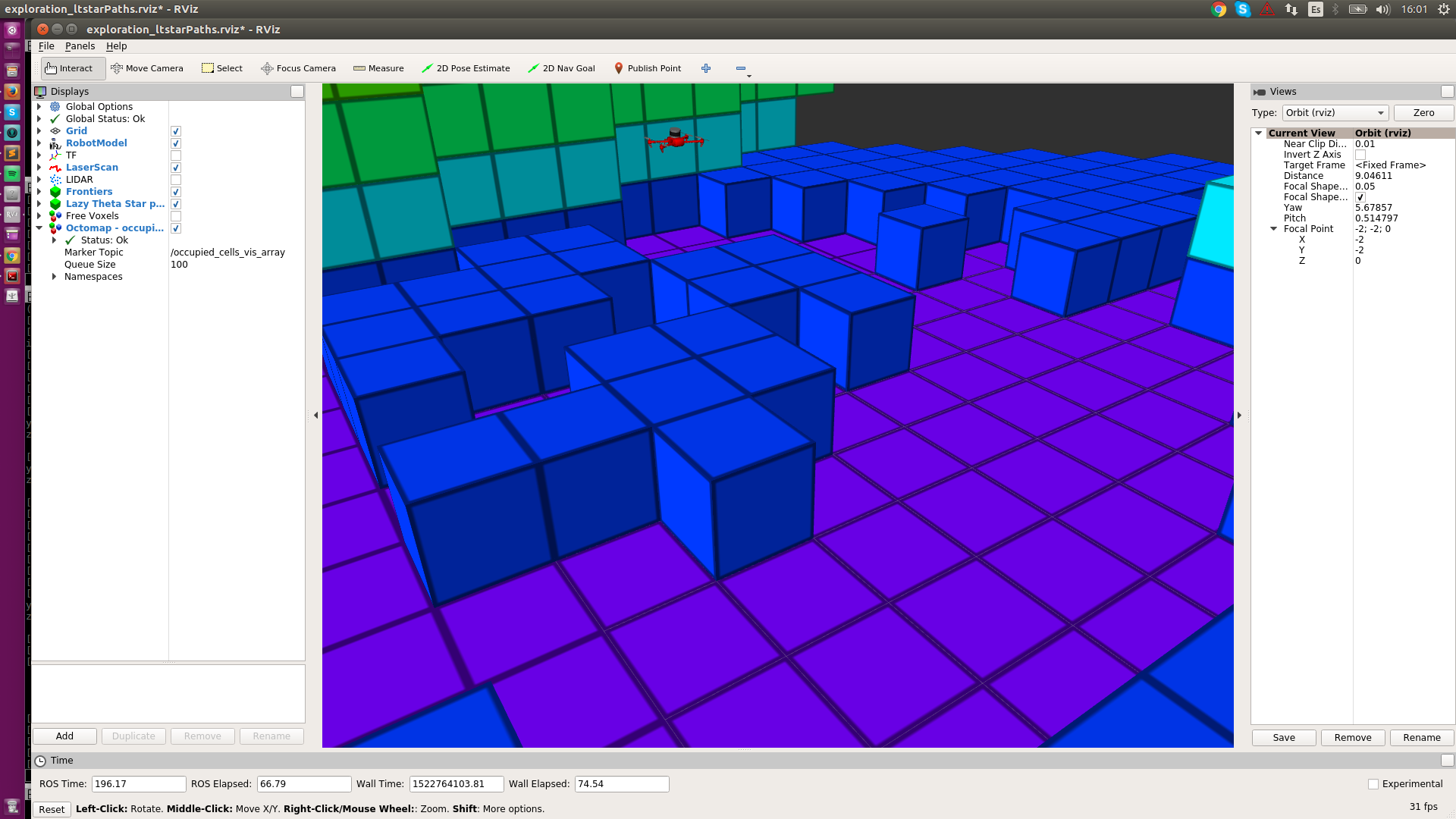

Here is a closer view of the sensor:

I'm not sure if this is just octomap being designed to work only with the points of the point cloud or if there is some parameter that I tune to change the data integration behavior.