How to Improve Inaccurate ROS Timer

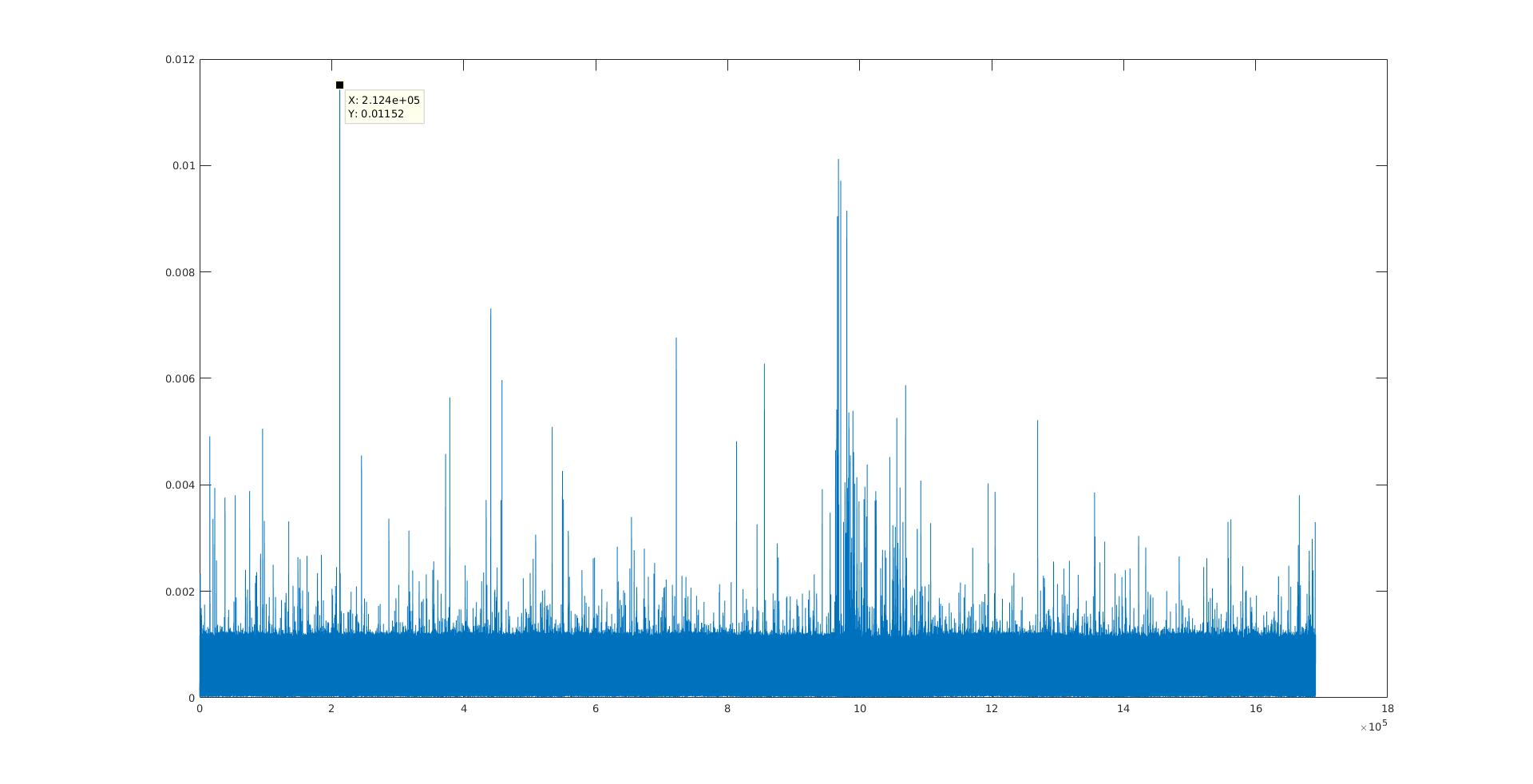

I use a ROS timer in my program and plot the difference between the real event time and the expected event time, computed as event.current_real - event.current_expected. The data show that the ROS Timer is not accurate at all. In my program the timer period is set to 0.002 seconds, but the largest error (0.01152 seconds) is more than 5.7 times the period. The plot of these errors is included with this post.

I know from the ROS wiki that the ROS Timer is not intended to be accurate and is subject to system load and other factors. What I want to know is how to make it as accurate as possible. Is there an alternative that can replace the ROS Timer?

I am using ROS Indigo and Ubuntu 14.04. The source code is listed below:

    #include "ros/ros.h"
    #include <fstream>

    std::ofstream time_file;

    // Log the difference between the actual and the expected callback time.
    void callback1(const ros::TimerEvent& event)
    {
      ros::Duration error_dur = event.current_real - event.current_expected;
      // '\n' instead of std::endl avoids flushing the file on every callback.
      time_file << error_dur.toSec() << '\n';
    }

    int main(int argc, char **argv)
    {
      time_file.open("time_file.txt");
      time_file << "error_dur" << std::endl;

      ros::init(argc, argv, "talker");
      ros::NodeHandle n;
      ros::Timer timer1 = n.createTimer(ros::Duration(0.002), callback1);

      ros::spin();  // returns once the node is shut down

      time_file.close();
      return 0;
    }
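
To quantify the error from the log rather than reading it off a plot, a small helper along these lines could summarize `time_file.txt`. This is only a sketch: `ErrorStats` and `summarize_errors` are hypothetical names, not part of ROS, and it assumes the file layout written by the program above (one header line, then one error value in seconds per line).

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <istream>
#include <sstream>
#include <string>

// Hypothetical summary type (not part of ROS).
struct ErrorStats {
  std::size_t count;  // number of logged timer events
  double max_abs;     // largest |error| in seconds
  double mean_abs;    // mean |error| in seconds
  ErrorStats() : count(0), max_abs(0.0), mean_abs(0.0) {}
};

// Reads a log consisting of one header line followed by one error value
// (in seconds) per line, and returns basic statistics of the magnitudes.
ErrorStats summarize_errors(std::istream& in) {
  ErrorStats stats;
  std::string header;
  std::getline(in, header);  // skip the "error_dur" header line
  double value = 0.0;
  double sum = 0.0;
  while (in >> value) {
    const double magnitude = std::fabs(value);
    stats.max_abs = std::max(stats.max_abs, magnitude);
    sum += magnitude;
    ++stats.count;
  }
  if (stats.count > 0) {
    stats.mean_abs = sum / stats.count;
  }
  return stats;
}
```

Passing an `std::ifstream` opened on `time_file.txt` works because the function takes any `std::istream`. Dividing `max_abs` by the 0.002 second period gives the ratio quoted in the question (0.01152 / 0.002 = 5.76).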

Simply put, ROS is not real-time. This post may give you some idea why: http://answers.ros.org/question/13455... ROS 2 also takes a new approach to the real-time aspect: http://design.ros2.org/articles/realt...
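
As one possible alternative to the ROS Timer, a dedicated thread can run a fixed-rate loop that sleeps until absolute deadlines on a monotonic clock. The sketch below is not a ROS API and does not make the system real-time; `run_periodic` is a hypothetical helper name, and it assumes the node can be built with C++11 enabled. Wake-up lateness still depends on the scheduler and system load, so this may reduce jitter but cannot bound it.

```cpp
#include <chrono>
#include <cstddef>
#include <thread>
#include <vector>

typedef std::chrono::steady_clock Clock;

// Hypothetical helper (not a ROS API): calls `work` once per period for
// `cycles` iterations and returns, for each cycle, how late the thread
// woke up relative to its deadline, in seconds.
template <typename Work>
std::vector<double> run_periodic(Clock::duration period, std::size_t cycles,
                                 Work work) {
  std::vector<double> lateness;
  lateness.reserve(cycles);  // avoid allocating inside the timed loop
  Clock::time_point deadline = Clock::now();
  for (std::size_t i = 0; i < cycles; ++i) {
    // Advance an absolute deadline instead of sleeping for a relative
    // duration, so a late wake-up does not shift all later cycles.
    deadline += period;
    std::this_thread::sleep_until(deadline);
    lateness.push_back(
        std::chrono::duration<double>(Clock::now() - deadline).count());
    work();
  }
  return lateness;
}
```

For the 0.002 second period from the question, the call would look like `run_periodic(std::chrono::milliseconds(2), 1000, callback)`, where `callback` is whatever function should run each cycle. The returned values play the same role as event.current_real - event.current_expected and can be logged after the loop finishes, which keeps file I/O out of the timed path.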