Is rostopic hz inaccurate?

Hello everyone.

Sometimes when I measure the frequency of messages on a topic, the printed numbers don't make sense.

For example:

rostopic hz /robot/head/head_state

gives:

subscribed to [/robot/head/head_state]

average rate: 100.000

min: 0.007s max: 0.015s std dev: 0.00097s window: 96

average rate: 100.000

min: 0.004s max: 0.016s std dev: 0.00111s window: 192

average rate: 100.000

min: 0.004s max: 0.016s std dev: 0.00100s window: 289

average rate: 100.000

min: 0.004s max: 0.016s std dev: 0.00112s window: 386

average rate: 100.000

min: 0.004s max: 0.016s std dev: 0.00105s window: 483

average rate: 100.000

min: 0.004s max: 0.017s std dev: 0.00109s window: 581

average rate: 100.000

min: 0.004s max: 0.017s std dev: 0.00116s window: 678

average rate: 100.000

min: 0.004s max: 0.017s std dev: 0.00115s window: 776

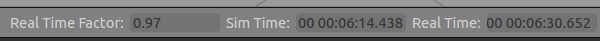

So it measures 776 messages in 8 seconds and still tells me that the average rate is 100.000? My calculator tells me that 776 messages over 8 seconds is 776 / 8 = 97 Hz. Why don't the numbers in my terminal match this?
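
The arithmetic behind the 97 Hz figure can be sketched in Python. This is only a rough check, not how `rostopic hz` computes its rate. It assumes that one report is printed per second, so the 8 reports span 8 seconds. The window values are copied from the output above; the function names `estimated_rate` and `per_report_increments` are made up for illustration.

```python
# Window sizes copied from the rostopic hz output above.
windows = [96, 192, 289, 386, 483, 581, 678, 776]

# Assumption: one report is printed per second, so 8 reports span 8 seconds.
REPORT_INTERVAL_S = 1.0


def estimated_rate(window_counts, report_interval_s=REPORT_INTERVAL_S):
    """Messages counted so far divided by the assumed elapsed time."""
    elapsed_s = len(window_counts) * report_interval_s
    return window_counts[-1] / elapsed_s


def per_report_increments(window_counts):
    """Number of new messages added to the window between consecutive reports."""
    previous = [0] + window_counts[:-1]
    return [cur - prev for cur, prev in zip(window_counts, previous)]


if __name__ == "__main__":
    print(estimated_rate(windows))         # 97.0
    print(per_report_increments(windows))  # [96, 96, 97, 97, 97, 98, 97, 98]
```

Under that assumption, every report adds 96 to 98 messages to the window, which is where the roughly 97 Hz estimate comes from. If the reports are not spaced exactly one second apart, the estimate changes accordingly.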